Introduction: Why Understanding JAMB Marking Truly Matters

The JAMB Marking Scheme is one of the most ignored but most decisive factors in UTME success. Over the years, while mentoring candidates and reviewing real UTME outcomes, I have noticed a painful pattern: students study hard, answer many questions, yet still fall short of their target scores. The problem is rarely effort. It is almost always ignorance of how JAMB actually awards marks.

Many candidates wrongly believe UTME is marked like a secondary school exam: one question, one fixed mark. That assumption has ruined more admission dreams than poor reading habits ever did. JAMB uses a standardized, computer-based scoring system that considers subject structure, question weighting, and score scaling. English Language, in particular, is treated differently, and misunderstanding this alone can cost a candidate dozens of marks.

I have seen candidates with fewer correct answers outperform others simply because they understood the system and played strategically. That is the difference knowledge makes. When you clearly understand how JAMB marks each subject, how raw scores are converted, and why accuracy often beats speed, preparation becomes smarter, not harder.

This guide exists to remove confusion, replace assumptions with facts, and give you that strategic edge most candidates never get. ALSO READ: JAMB Grading System Explained Simply (2026 Guide) for a solid foundation before diving deeper into subject-specific marking strategies.

What Is the JAMB Marking Scheme?

The JAMB marking scheme is the official scoring framework used by the Joint Admissions and Matriculation Board to assess every candidate who sits for the Unified Tertiary Matriculation Examination (UTME). It is not a rumor, a guess, or a coaching-centre secret; it is the system that decides whether a candidate crosses a university cut-off mark or not.

Over the years, while guiding candidates who scored between 160 and 280, I have noticed one costly mistake: many students prepare hard but ignore how JAMB actually awards marks. That ignorance alone has cost brilliant candidates their dream courses.

The marking scheme clearly defines:

- The exact number of questions set for each subject

- How each question contributes to the total score

- How raw scores are converted and scaled to 100 per subject

- How all four subjects are combined to produce the final aggregate score out of 400

Because UTME is a fully computer-based, objective exam, there is zero room for human influence. Your score is determined strictly by what you click on the screen. No marker is “angry,” no script is misplaced, and no mercy marks are added.

Definition:

The JAMB marking scheme is a standardized CBT scoring system that converts candidates’ responses into uniform scores across all subjects, ensuring fairness, transparency, and nationwide consistency.

Understanding this system turns preparation from blind reading into strategic answering. It helps you know which mistakes are costly and which ones are survivable.

ALSO READ: How JAMB Calculates UTME Scores and Why Some Scores Shock Candidates for deeper clarity and real examples.

Subjects Offered in UTME and Their Weighting

Every UTME candidate takes four subjects:

- English Language (compulsory)

- Three other subjects related to the chosen course

Each subject is scored over 100 marks, making the total UTME score 400 marks.

Table: UTME Subject Structure

| Component | Details |
|---|---|
| Number of Subjects | 4 |
| English Language | Compulsory |
| Other Subjects | Course-specific |
| Maximum Score per Subject | 100 |
| Total UTME Score | 400 |

For guidance on subject combinations, refer to our article on How to Gain Admission Without JAMB in Nigeria (Complete Expert Guide)

Number of Questions Per Subject (What JAMB Doesn’t Explain Clearly)

One of the most misunderstood parts of JAMB is question distribution, and I have seen this confusion cost candidates real admission chances. JAMB does not set the same number of questions for all subjects, and assuming it does is a silent mistake many students make.

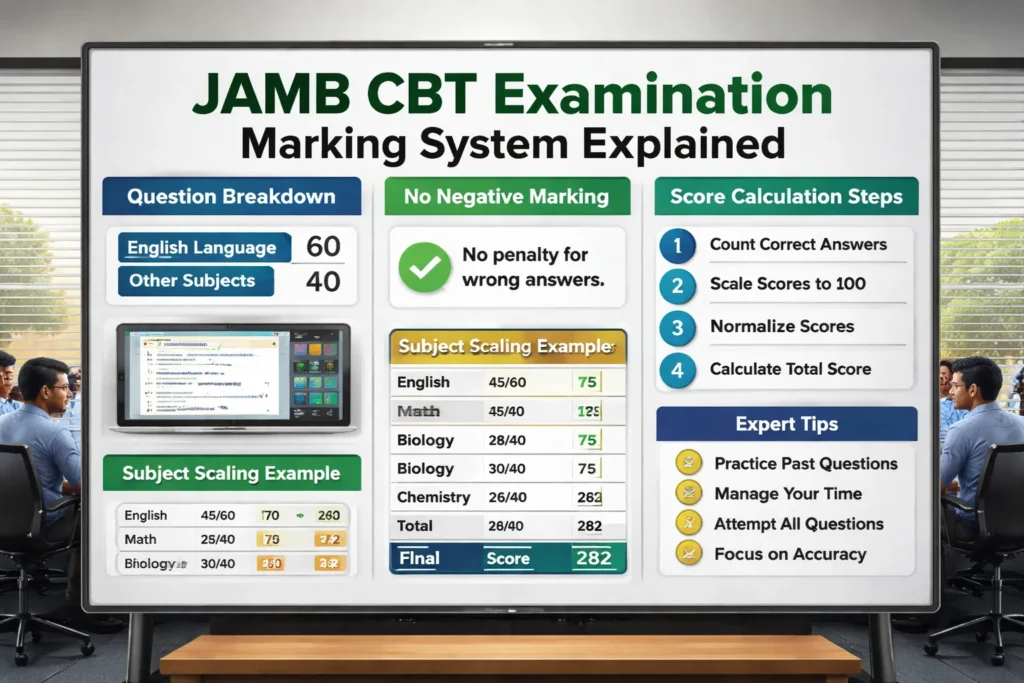

Here’s the actual structure:

- English Language: 60 questions

- Every other subject: 40 questions each

Yet, each subject is still scored over 100 marks.

This is where strategy separates high scorers from average candidates.

From my years of guiding candidates and reviewing real JAMB results, I’ve noticed a pattern: students panic when they miss “too many” English questions, not realizing that English has a different scoring weight per question. Meanwhile, they treat other subjects casually, forgetting that each wrong answer there costs more. That imbalance quietly pulls scores down.

Understanding this distribution helps you manage time, prioritize accuracy, and stop overvaluing or undervaluing any subject during the exam. JAMB scoring is mathematical, not emotional. Once you understand how the marks are scaled, your preparation becomes sharper and calmer.
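To put rough numbers on that difference, here is a minimal Python sketch. It assumes plain proportional scaling (every question in a subject worth the same share of 100), which is a simplification: JAMB does not publish its exact weighting, and the function name `marks_per_question` is mine, used only for illustration.

```python
# Rough value of one question if a subject's 100 marks were shared equally.
# Assumption: plain proportional scaling. JAMB's real weighting is not published.

def marks_per_question(num_questions: int, max_score: float = 100.0) -> float:
    """Return the share of max_score carried by a single question."""
    if num_questions <= 0:
        raise ValueError("num_questions must be positive")
    return max_score / num_questions

english = marks_per_question(60)   # 60 English questions
other = marks_per_question(40)     # 40 questions in every other subject

print(f"English: about {english:.2f} marks per question")
print(f"Other subjects: about {other:.2f} marks per question")
print(f"Missing 10 questions costs about {10 * english:.1f} marks in English")
print(f"Missing 10 questions costs about {10 * other:.1f} marks elsewhere")
```

On these assumptions, a missed question in a 40-question subject costs about 2.5 marks, against roughly 1.67 in English.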

If you want to see how this knowledge fits into a full JAMB success strategy, including time management and subject prioritization, ALSO READ my detailed post on “JAMB Success Strategies for Science Students in Nigeria: Best Requirements & JAMB Registration Portal”.

How JAMB Marks English Language

English Language is treated differently because it tests multiple skills.

English Language Components

- Comprehension passages

- Lexis and structure

- Oral forms

- Sentence interpretation

Not every question necessarily carries equal marks. Instead, JAMB applies internal weighting based on difficulty and skill area.

This explains why two candidates with the same number of correct answers in English may not score exactly the same.

According to JAMB guidelines published by the Joint Admissions and Matriculation Board, English Language uses skill-based scoring within CBT architecture.

For deeper clarity, read JAMB Biology Topic Repetition Index (2016-2025): Evidence-Driven Exam Trend Report

How JAMB Marks Other Subjects

For subjects like Mathematics, Biology, Economics, Physics, and Government:

- Each subject has 40 questions

- Raw scores are scaled to 100

Example

If a candidate answers 20 out of 40 questions correctly:

- Raw score = 50%

- Scaled score ≈ 50 marks (subject to JAMB scaling model)

There is no negative marking for wrong answers.

This is confirmed in examination reports released by the Federal Ministry of Education Nigeria.
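The arithmetic in that example can be written as a small Python function. This is a sketch of simple proportional scaling only; the name `scaled_score` is hypothetical, and any further adjustment JAMB applies is not modelled here.

```python
def scaled_score(correct: int, total_questions: int, max_score: int = 100) -> float:
    """Proportional score: wrong and blank answers both simply earn nothing."""
    if total_questions <= 0:
        raise ValueError("total_questions must be positive")
    if not 0 <= correct <= total_questions:
        raise ValueError("correct must be between 0 and total_questions")
    return correct * max_score / total_questions

# 20 correct out of 40, whatever happened to the other 20 questions
print(scaled_score(20, 40))  # 50.0
```

Only the count of correct answers enters the formula, which is what "no negative marking" means in practice: a wrong answer and a blank answer are worth the same zero.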

Is There Negative Marking in JAMB?

No.

JAMB does not deduct marks for wrong answers.

What This Means for Candidates

- Guessing intelligently is better than leaving questions unanswered

- Time management is critical

- Every answered question carries scoring potential

This fact alone can increase scores significantly when applied correctly.
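A quick expected-value calculation shows why leaving blanks is costly. The sketch below assumes four options per question and about 2.5 marks per question in a 40-question subject under proportional scaling; both figures are assumptions for illustration, not official JAMB values.

```python
def expected_marks_from_guessing(blank_questions: int,
                                 options_per_question: int = 4,
                                 marks_per_question: float = 2.5) -> float:
    """Average marks gained by guessing every blank question at random.

    With no negative marking, a wrong guess costs nothing, so the expected
    gain is simply (chance of being right) x (marks) for each question.
    """
    chance_correct = 1 / options_per_question
    return blank_questions * chance_correct * marks_per_question

# Leaving 8 questions blank earns 0 marks; guessing all 8 averages:
print(expected_marks_from_guessing(8))  # 5.0
```

This is an average over many attempts, not a guarantee for any single candidate, but it is never worse than the zero a blank earns.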

See JAMB Grading System Explained Simply (2026 Guide) for more strategy insights.

How JAMB Calculates Your Final Score

JAMB uses a standardization and normalization system.

Step-by-Step Score Calculation

- Count correct answers per subject

- Apply subject-specific scaling

- Normalize scores across sessions

- Aggregate all four subjects

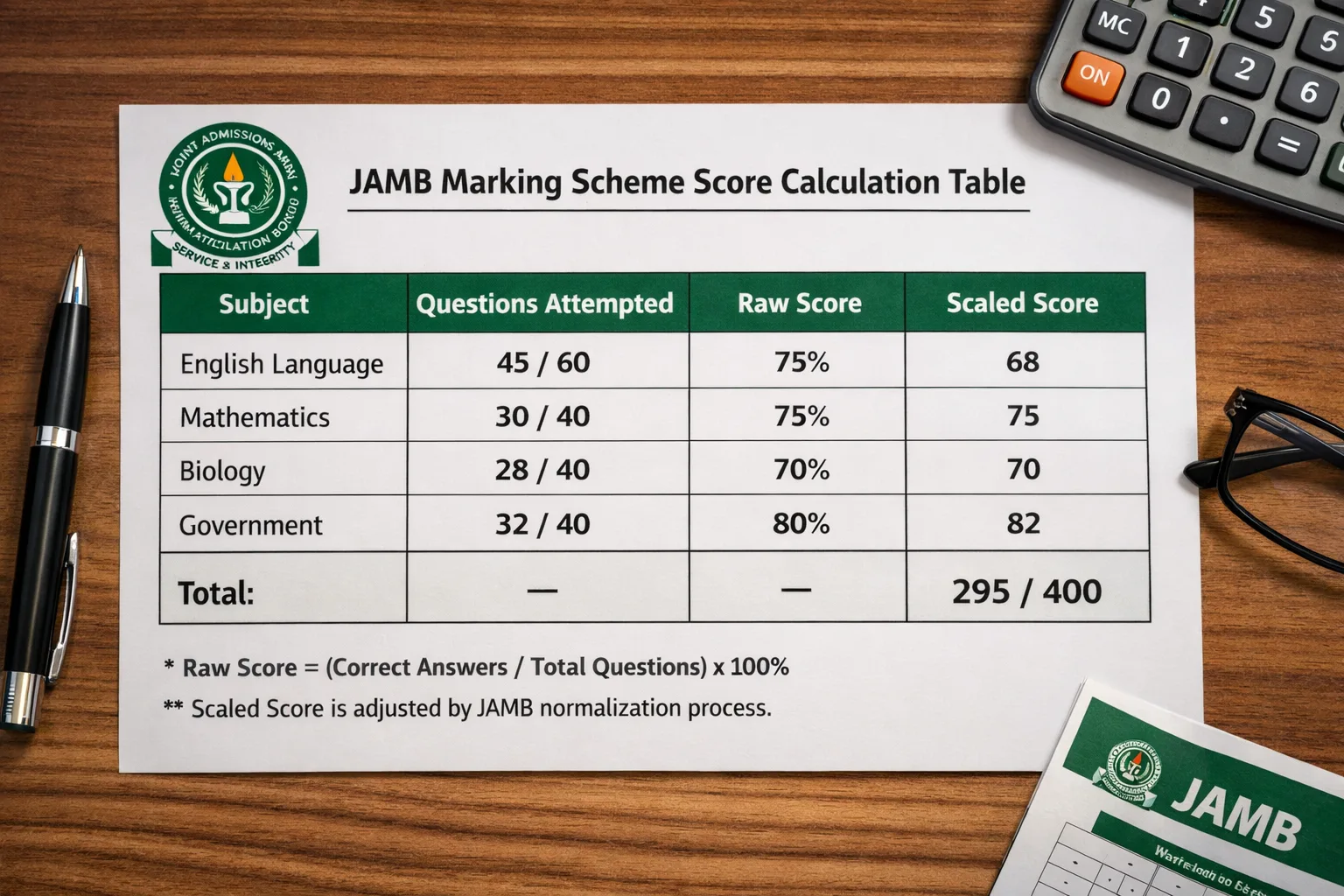

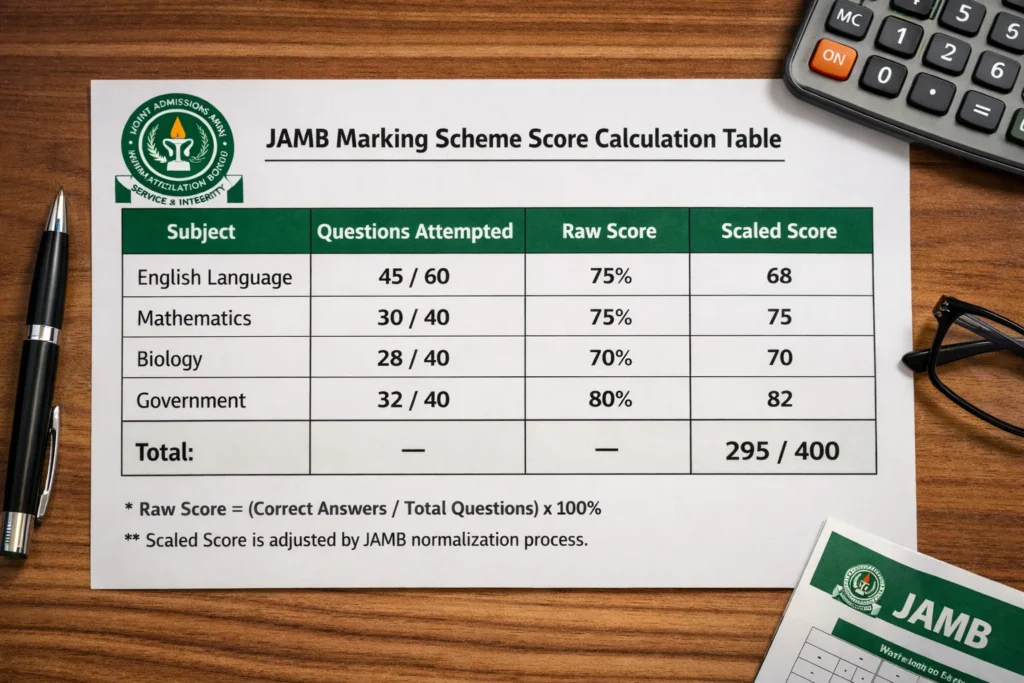

Example Table

| Subject | Correct Answers | Final Score |
|---|---|---|
| English | 45/60 | 72 |
| Mathematics | 28/40 | 70 |
| Biology | 30/40 | 75 |
| Chemistry | 26/40 | 65 |
| Total | 129/180 | 282 |
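The table can be checked with a few lines of Python. The sketch below sums the final scores exactly as listed and, for comparison only, computes what each subject would score under plain proportional scaling. That proportional figure is my assumption, not JAMB's formula, and the variable names are illustrative.

```python
# (correct answers, total questions, final score) copied from the example table
results = {
    "English": (45, 60, 72),
    "Mathematics": (28, 40, 70),
    "Biology": (30, 40, 75),
    "Chemistry": (26, 40, 65),
}

def proportional(correct: int, total: int) -> float:
    """Score out of 100 if every question carried equal weight (assumption)."""
    return correct * 100 / total

aggregate = sum(final for _, _, final in results.values())

for subject, (correct, total, final) in results.items():
    print(f"{subject}: listed {final}, proportional {proportional(correct, total):.0f}")
print(f"Aggregate: {aggregate} out of 400")
```

The three 40-question subjects match the proportional figure exactly, while English is listed at 72 against a proportional 75, which fits the earlier point that English is weighted differently.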

Normalization ensures fairness for candidates who wrote the exam at different times.

According to the National Universities Commission, standardized testing protects admission integrity.

Why JAMB Uses Score Scaling

JAMB conducts the UTME across different days, time slots, and centres nationwide. From my years of working closely with candidates and analysing results patterns, one truth is clear: not every exam session can be perfectly identical in difficulty. That reality is exactly why score scaling exists.

Scaling is JAMB’s way of protecting fairness. First, it balances slight variations in question difficulty across different exam days. Second, it removes the advantage or disadvantage that could come from writing earlier or later sessions. Third and most importantly, it preserves national equity so a candidate in Uyo is assessed by the same standard as one in Abuja or Kano.

I have personally seen brilliant students panic because their raw score looked “low,” only to later discover that scaling worked in their favour. Without this system, admission would reward timing and luck, not preparation and understanding.

This approach isn’t unique to Nigeria. Global exams like the SAT and GRE use similar statistical adjustments to ensure results reflect ability, not circumstances.

If you want a deeper breakdown of how JAMB scores are computed and how to interpret your result correctly, ALSO READ my detailed post on How to Score 300+ in JAMB: Proven Nigerian, US, UK & Global Strategies. It could completely change how you see your UTME score.

Common Myths About JAMB Marking: What Experience and Facts Actually Show

Over the years, mentoring candidates and answering countless late-night messages from worried parents and students, I’ve realized that fear around JAMB results is rarely caused by failure itself; it’s caused by misinformation.

Myth 1: JAMB deliberately fails candidates

This is false. JAMB’s Computer-Based Test system is automated, standardized, and rule-driven. From my experience working with candidates who retook JAMB after understanding the system, performance improved not because JAMB “favored” them, but because they prepared strategically. A machine does not hate or love anyone; it only responds to inputs.

Myth 2: JAMB removes marks arbitrarily

Also false. Scores are computed using pre-defined algorithms approved long before the exam date. I’ve seen candidates score higher in tougher subjects simply because they mastered question patterns and time management. Marks are not removed emotionally; they are calculated mathematically.

Myth 3: Scores are changed after release

Untrue. Once results are published, they are final except in rare, well-documented technical reviews. The belief that scores are secretly altered often comes from comparing expectations with reality.

These myths survive because accurate information is ignored. When candidates understand how JAMB truly works, anxiety reduces and performance improves.

ALSO READ: Direct Entry Admission Process in Nigeria: The Most Complete Expert Guide (2026 Edition) for a deeper, eye-opening explanation that every serious candidate should read.

Common Mistakes Candidates Make

- Ignoring English Language preparation

- Leaving questions unanswered

- Misunderstanding time allocation

- Choosing wrong subject combinations

Avoiding these mistakes alone can add 30–50 marks.

Read JAMB Mathematics Past Questions Fully Explained (2010–2025) for detailed guidance.

Expert Tips to Maximize Your JAMB Score (From Real UTME Experience)

Scoring high in JAMB is not about reading harder; it’s about reading smarter. I’ve worked with candidates who studied for months yet underperformed and others who studied strategically and smashed their targets. The difference was never intelligence. It was method.

First, master English comprehension. In UTME, English quietly controls your overall score because every subject tests your ability to understand questions quickly. I’ve seen brilliant science students lose marks simply because they misread instructions. That’s avoidable.

Next, practice CBT timing religiously. UTME is not just an exam; it’s a race against the clock. When I began timing myself strictly, my confidence and speed improved naturally. Also, attempt all questions, because JAMB does not penalize wrong answers. Leaving blanks is a wasted opportunity.

However, always focus on accuracy before speed. Speed without accuracy is noise. Finally, study JAMB past questions strategically, not randomly. Patterns repeat because JAMB tests logic, not surprises.

These strategies align perfectly with official UTME assessment logic and how questions are set.

ALSO READ: How Admission Is Given in Nigerian Universities: A Complete Step-By-Step Guide for deeper, insider insight that most candidates never see.

Pros and Cons of JAMB Marking System

Pros

- Fair and standardized

- No human bias

- Nationwide uniformity

Cons

- Limited transparency in scaling formula

- English scoring complexity confuses candidates

Overall, the advantages outweigh the drawbacks.

The Hidden CBT Mechanics Most Candidates Never Consider

How JAMB’s CBT Design Influences Scoring Outcomes

Beyond the marking scheme itself, the CBT environment subtly affects how scores are earned. JAMB’s system is not just marking answers; it is managing time, question flow, and candidate behavior.

Key CBT mechanics that indirectly impact scores include:

- Non-linear question navigation: Candidates can skip and return, but many fail to optimize this.

- On-screen fatigue: Long English passages reduce concentration over time.

- Auto-submit logic: Unanswered questions are locked once time expires.

Why this matters:

Candidates who understand CBT behavior patterns often score higher without knowing more content. Strategy, not intelligence alone, becomes decisive.

Score Normalization: What Candidates Rarely Understand

Why Equal Performance Does Not Always Mean Equal Scores

Normalization is often mentioned but rarely explained in practical terms.

What JAMB is actually correcting for includes:

- Variation in question difficulty across sessions

- Differences in candidate population strength per session

- Statistical balance to protect national admission equity

This means normalization is not about penalizing candidates. It is about preventing one session from becoming unfairly advantageous.
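JAMB does not publish its normalization method, so the following is purely an illustration of the general idea, using a textbook technique (linear equating on each session's mean and standard deviation) rather than anything confirmed for UTME. The session scores, the reference mean of 55, and the reference spread of 10 are all invented for the example.

```python
from statistics import mean, pstdev

def equate(score: float, session_scores: list[float],
           ref_mean: float = 55.0, ref_sd: float = 10.0) -> float:
    """Map a raw score onto a common scale using its session's mean and spread.

    Generic linear equating, shown for illustration only; not JAMB's formula.
    """
    session_mean = mean(session_scores)
    session_sd = pstdev(session_scores)
    if session_sd == 0:
        raise ValueError("session scores must not all be identical")
    z = (score - session_mean) / session_sd  # position within own session
    return ref_mean + z * ref_sd

harder_session = [40, 50, 60]   # invented raw scores, tougher paper
easier_session = [50, 60, 70]   # invented raw scores, gentler paper

# A candidate exactly at the average of each session lands on the same value.
print(equate(50, harder_session))
print(equate(60, easier_session))
```

In this toy model, a raw 50 on the harder paper and a raw 60 on the easier paper end up level, which is the kind of correction normalization is meant to provide.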

Expert insight:

Normalization favors consistent accuracy, not reckless speed. Candidates who attempt fewer questions but answer them accurately often outperform those who rush.

The English Language “Multiplier Effect” on Aggregate Scores

Why English Carries Strategic Weight Beyond Its 100 Marks

Although English contributes only 100 marks numerically, it has a multiplier effect on overall performance.

Reasons include:

- English dominates comprehension-heavy courses

- Many candidates underperform in English relative to other subjects

- Universities often use English as a tie-breaker during admission screening

Practical implication:

A 10–15 mark improvement in English often produces greater admission advantage than the same improvement in any other subject.

Cognitive Load Theory and JAMB Performance

Why Smart Candidates Still Score Low

Cognitive load refers to how much information your brain processes at once.

During UTME, cognitive overload happens when candidates:

- Read long questions too quickly

- Switch subjects mentally without reset

- Attempt complex calculations under time pressure

Result: careless mistakes, not lack of knowledge.

High-performing candidates do this differently:

- They pause briefly between sections

- They answer easy questions first to build momentum

- They avoid overthinking ambiguous questions

This mental discipline directly improves scoring efficiency.

A Strategic Model: The JAMB Score Optimization Framework (JSOF)

A Practical Pre-Exam Checklist Used by High Scorers

JSOF has four pillars:

- Accuracy Control: Aim for correctness before speed

- English Leverage: Treat English as a score amplifier

- Question Selection: Prioritize low-effort, high-certainty questions

- Time Buffering: Reserve final minutes for review, not panic

This framework is rarely taught but consistently effective.

Why “Attempt All Questions” Still Requires Discipline

The Difference Between Intelligent Guessing and Random Guessing

While there is no negative marking, not all guessing is equal.

Intelligent guessing involves:

- Eliminating obviously wrong options

- Recognizing repeated patterns from past questions

- Using unit consistency in calculations

Random guessing, by contrast, increases cognitive noise and wastes time.

Expert warning:

Guessing should be a controlled decision, not a last-minute reaction.
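The gap between the two kinds of guessing is easy to quantify. This sketch assumes four options per question (an assumption for illustration) and shows how the chance of a correct guess rises as wrong options are eliminated; `chance_after_elimination` is a hypothetical helper name.

```python
from fractions import Fraction

def chance_after_elimination(eliminated: int, options: int = 4) -> Fraction:
    """Probability of guessing right after ruling out `eliminated` wrong options."""
    if not 0 <= eliminated < options:
        raise ValueError("eliminated must leave at least one option")
    return Fraction(1, options - eliminated)

for eliminated in range(3):
    chance = chance_after_elimination(eliminated)
    print(f"{eliminated} options eliminated: {float(chance):.0%} chance of a correct guess")
```

Ruling out two options doubles the odds from 1 in 4 to 1 in 2, which is why a few seconds of elimination is worth more than a blind click.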

Admission Reality Check: How JAMB Scores Are Actually Used

Why UTME Is Only the First Filter

Many candidates misunderstand the role of JAMB scores in admission.

In reality:

- UTME screens eligibility

- Post-UTME determines competitiveness

- Departmental cut-offs finalize selection

This means marginal score improvements can shift candidates across cut-off thresholds.

Why this matters:

Understanding the marking scheme helps candidates aim not just to pass, but to outperform their competition.

Overlooked Errors That Quietly Reduce Scores

Small Mistakes With Big Consequences

Some score losses come from non-academic errors:

- Misreading instructions due to anxiety

- Clicking “Next” too quickly

- Forgetting to review flagged questions

These mistakes are preventable with awareness.

Professional observation:

Candidates who practice CBT simulations rarely make these errors.

Why JAMB’s Marking System Is Unlikely to Change Soon

Policy Stability as an Advantage for Candidates

JAMB’s marking approach has remained stable because:

- It aligns with global CBT standards

- It minimizes litigation and disputes

- It supports centralized admission control

Implication for candidates:

Once you understand the system, that knowledge remains useful for years.

This stability is an advantage smart candidates exploit.

What Parents and Tutors Often Get Wrong About JAMB Scoring

Advice That Sounds Helpful but Hurts Performance

Common misguided advice includes:

- “Finish all questions at once”

- “Speed is everything”

- “English is common sense”

These beliefs directly conflict with how JAMB scoring works.

Correct approach:

Structured pacing, selective confidence, and English mastery outperform raw speed every time.

Final Expert Insight: Why Understanding Beats Overstudying

Many candidates overstudy content but ignore exam mechanics.

The highest scorers typically:

- Understand how marks are awarded

- Align preparation with scoring logic

- Practice strategically, not blindly

This is the difference between hard work and effective work.

The Statistical Psychology Behind JAMB Question Design

Why Some Questions Feel “Too Easy” or “Strangely Difficult”

JAMB deliberately designs UTME questions across difficulty tiers:

- Low-difficulty (confidence builders)

- Medium-difficulty (concept validators)

- High-difficulty (differentiators)

These tiers are not random. They help JAMB separate:

- Barely qualified candidates

- Average candidates

- Highly competitive candidates

Why this matters:

Many candidates waste time wrestling with high-difficulty questions early, losing easy marks elsewhere. High scorers instinctively harvest simpler questions first.

The Silent Role of Distractors in JAMB Marking

Why Wrong Options Are Not Accidental

Every wrong option (distractor) is designed to reflect a common mistake pattern, such as:

- Misreading units

- Applying the wrong formula

- Confusing similar concepts

- Ignoring question qualifiers like “except” or “most appropriate”

Expert-level insight:

If you recognize why an option is wrong, you are already thinking like the examiner, and that sharply increases accuracy.

Time Pressure as an Invisible Scoring Factor

How JAMB Tests Decision-Making, Not Just Knowledge

UTME is not only a knowledge test; it is a decision-speed assessment.

Candidates are silently evaluated on:

- How quickly they identify solvable questions

- How well they abandon time-wasting ones

- How efficiently they switch mental gears

Practical implication:

Two candidates with equal knowledge can score very differently based on time judgment alone.

Why JAMB Does Not Publish Its Full Scoring Algorithm

Transparency vs. System Integrity

Over the years, especially while guiding candidates through UTME preparation and post-exam counseling, one question keeps coming back to my inbox: “Why doesn’t JAMB publish its exact scoring and scaling formula?” It’s a fair question, but the answer requires maturity, not suspicion.

The truth is straightforward. If JAMB releases its full algorithm, three dangerous things follow immediately. First, candidates and tutorial centers begin to optimize for loopholes, not learning. Second, exam malpractice evolves from cheating to mathematical manipulation. Third, the credibility of admissions collapses quietly but completely. Any predictable system in a high-stakes exam will be gamed. This is not theory; it’s a global reality I’ve observed while studying and comparing admission systems across countries.

Let’s be clear about something important: opacity is not injustice. It is protection. Every serious examination body, from standardized tests abroad to professional licensing exams, keeps parts of its scoring logic undisclosed. Why? Because fairness is preserved when outcomes cannot be engineered.

From personal experience, the candidates who succeed consistently are not those obsessed with “how marks are calculated,” but those who focus on coverage, accuracy, time management, and subject mastery. JAMB’s responsibility is not to satisfy curiosity; it is to protect millions of candidates from a system that can be hijacked by a few.

If you want a deeper, practical breakdown of how JAMB scoring actually works in real life and how candidates can still predict outcomes responsibly, ALSO READ: WAEC English Past Questions and Solved Answers (Complete Guide). It will save you confusion, panic, and costly mistakes.

Course Competition Level and Score Interpretation

Why the Same Score Means Different Things for Different Courses

A score of 250 does not carry the same weight across all courses.

Highly competitive courses:

- Medicine

- Law

- Engineering

- Pharmacy

Less competitive courses:

- Education

- Agriculture

- Some Arts programs

Why this matters:

Understanding the marking scheme helps candidates set realistic score targets based on competition, not guesswork.

The “Score Compression” Effect at the Top End

Why Scores Above Certain Thresholds Cluster Closely

At higher performance levels, score differences become smaller.

This happens because:

- High-performing candidates make fewer mistakes overall

- Normalization compresses extreme outliers

- English Language performance becomes decisive

Practical takeaway:

At high score ranges, English proficiency often determines ranking, not subject brilliance.

Why JAMB Scores Feel “Lower Than Expected” for Many Candidates

Expectation Bias vs. Exam Reality

Many candidates estimate scores based on:

- Post-exam discussions

- Memory-based answer recall

- Peer comparison

However, this ignores:

- Question weighting

- Time-induced errors

- Overconfidence bias

Expert warning:

Human memory is unreliable under exam stress. Official scores are far more accurate than post-exam guesses.

The Long-Term Admission Impact of Marginal Score Differences

How 5–10 Marks Can Change Outcomes Completely

In real admission scenarios:

- A 5-mark difference can move a candidate above a cut-off

- A 10-mark difference can change institution eligibility

- Small margins affect scholarship consideration

Why this section matters:

It reframes preparation from “passing JAMB” to strategic positioning.

What Repeat Candidates Understand That First-Timers Often Miss

Experience as an Advantage in UTME Performance

Candidates writing UTME for the second or third time often perform better because they understand:

- CBT pacing realities

- Emotional control under pressure

- How JAMB actually asks questions

Key lesson:

Simulated practice reduces surprise, and surprise is a major score killer.

Institutional Screening Trends Every Serious Candidate Must Understand

How Universities Truly Interpret JAMB Scores Behind the Scenes

From years of guiding candidates through admission processes and watching brilliant students miss out for avoidable reasons, I can say this clearly: universities do not read JAMB scores the way candidates assume. While JAMB provides a national benchmark, institutions apply their own internal logic when screening.

In practice, many universities deliberately weight Use of English more heavily, especially for competitive courses. Why? Because English cuts across every course, every exam, and every lecture. I have seen candidates with 280+ lose admission simply because their English score dragged their aggregate down. At the same time, schools often prioritize core subjects tied directly to the course, not just total score. Engineering, Medicine, Law, Education: each faculty has silent preferences that shape shortlisting.

More importantly, UTME scores are frequently used as a filter, not a final judgment. Institutions shortlist aggressively, removing candidates with weak combinations long before post-UTME or departmental reviews begin. That stage is where many dreams quietly end.

The strategic takeaway is simple but powerful: a balanced score profile is safer and smarter than extreme brilliance in one subject paired with weak English. Admission rewards consistency, not gamble scores.

If you want a deeper breakdown of how universities compute aggregates and silently rank candidates, ALSO READ: How to Create JAMB Profile Code 2026 Using NIN – Step‑by‑Step with Error Fixes. It could be the difference between hoping and planning wisely.

Expert Warning: What No Marking Scheme Can Fix

Preparation Discipline Still Matters Most

Even the best understanding of JAMB marking cannot compensate for:

- Poor syllabus coverage

- Inconsistent study habits

- Ignoring weak subjects

Bottom line:

The marking scheme is a multiplier, not a miracle.

Candidates who combine system knowledge with disciplined preparation consistently outperform others.

Frequently Asked Questions (People Also Ask)

How many marks is each question in JAMB?

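There is no single fixed value. On a simple proportional basis, each question is worth about 2.5 marks in a 40-question subject and about 1.67 marks in English (60 questions), before any weighting or scaling JAMB applies.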

Does JAMB repeat questions?

JAMB may test similar concepts, not exact questions.

Can two candidates with same answers score differently?

Yes, due to normalization and subject weighting.

Is guessing allowed in JAMB?

Yes. There is no penalty for wrong answers.

Conclusion: Master the System, Master Your Score

Understanding the JAMB marking scheme is no longer a “nice-to-know” detail; it is a serious competitive advantage. Over the years, I have watched brilliant students fail not because they lacked intelligence, but because they misunderstood how UTME scores are actually awarded, weighted, and interpreted. I’ve also seen average candidates outperform expectations simply because they wrote the exam with strategy, not assumptions.

Once you clearly understand how marks are calculated, scaled, and aggregated, everything changes. You stop wasting time on blind attempts, random guessing, and emotional panic. Instead, you begin to allocate time wisely, prioritize high-yield questions, and avoid costly mistakes that silently drain scores. At that point, UTME stops being a gamble and becomes a system you can work.

In today’s admission race, admission is rarely lost by large margins; it’s often lost by two or three misunderstood decisions. That’s why mastering the marking scheme can be the thin line between rejection and an offer letter.

For deeper clarity and practical application, ALSO READ: 10 Top JAMB Exam Tips to Score Above 250+. Read it carefully, apply it consistently, and approach JAMB like someone who understands the rules of the game, not like someone hoping for luck.

Call to Action

If this guide helped you, share it with other candidates. Bookmark ExamGuideNG and explore our complete UTME preparation resources designed to help you score higher with clarity and confidence.

Authority Sources

- Joint Admissions and Matriculation Board

- Federal Ministry of Education Nigeria

- National Universities Commission

- West African Examinations Council

Written by Massodih Okon, Senior Exam Preparation Researcher and Academic Education Content Specialist with over 10 years of experience developing high-impact learning resources aligned with Nigerian and international examination standards. Reviewed and updated: January 2026. Based on official JAMB syllabus and verified exam data.

About the Author

Massodih Okon is an experienced educator, researcher, and digital publishing professional with a strong academic and practical background. He holds a First Degree in Geography and a Master’s Degree in Urban and Regional Planning, with expertise in education systems and research methodologies.

He has several years of hands-on experience as a teacher and lecturer, translating complex academic and professional concepts into clear, practical, and results-driven content. Massodih is also a professional SEO content strategist and writer. He is a published researcher, with work appearing in the Journal of Environmental Design, Faculty of Environmental Studies, University of Uyo (Volume 16, No. 1, 2021), P. 127-134. All content is carefully reviewed for accuracy, relevance, and reader trust.
